Published at MetaROR

May 5, 2026

Evaluating the Claim that Preregistration and Registered Reports Restrict Exploratory Research

1 Department of Psychology, University of Minnesota, Minnesota, USA

Originally published on December 12, 2025 at:

Editors

Kathryn Zeiler

Olmo van den Akker

Editorial assessment

by Olmo van den Akker

I much appreciated the detailed explanations for the decisions made in the design, which made it much easier to arrive at an informed assessment. That level of detail also seems to have helped the reviewers provide the elaborate feedback they did.

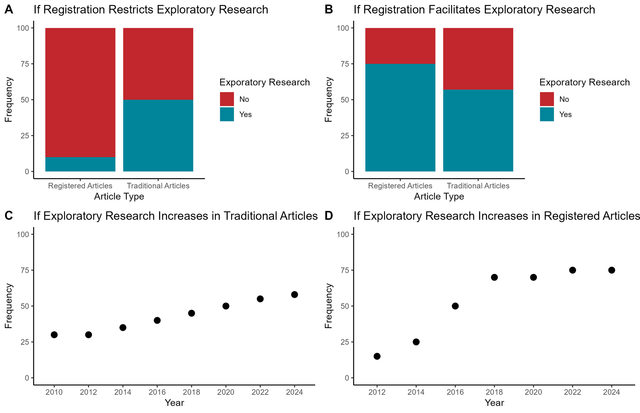

Both reviewers agree that the manuscript takes up an important question, but both also think the current proposal does not yet support the claims it wants to make. In particular, the reviewers think that the study can speak to how exploratory analyses are reported or labeled in published papers, but not to whether preregistration and Registered Reports actually reduce exploratory research itself, or diminish creativity, novelty, and serendipity. They also raise related methodological concerns: the definition of exploratory research is still too loose for reliable coding, the coding plan and statistical analyses are not yet sufficiently specified, and some design choices (coding at the article rather than study level, ignoring differences in preregistration quality, and not accounting for journal-specific reporting requirements) make the eventual interpretation of the results difficult.

The reviewers are largely aligned on these central points. However, Reviewer 1 puts more weight on the broader framing of the debate and on the possibility that the study is really picking up journal policy rather than research practice, while Reviewer 2 focuses more on the need for a tighter protocol and a clearer analysis plan. The manuscript is well written, but some of the language in the abstract and Methods section is imprecise, and that lack of precision contributes to the larger conceptual problem.

Recommendations from the editor

The main revision needed is a clearer match between the paper’s framing and what the proposed design can actually show. At the moment, the study seems able to examine the explicit reporting of exploratory analyses but not the actual frequency or value of exploratory research. The abstract and introduction should reflect that. Additionally, it should not be implied that the study will test whether preregistration restricts creativity, discovery, or serendipity unless those outcomes are going to be measured directly.

The manuscript would benefit from an explicit coding protocol that spells out how raters will identify exploratory analyses and what counts as sufficient evidence that an analysis was unplanned. It is difficult to assess whether this important assessment is valid based on the text in the manuscript alone. Both reviewers already indicated some concerns about this validity. Moreover, the coding plan seems to focus mainly on the Introduction, Results/Data Analysis, and Discussion, but in psychology papers, analytic decisions are often not presented in a separate data analysis section but folded into the Methods section. As a practical adjustment, I think it would make sense to also code those parts of the Methods section that describe the analysis plan, even when they are not explicitly labeled as such.

I would also encourage the authors to reconsider the decision to code at the article level rather than the study level, especially in multi-study papers that may mix preregistered and non-preregistered components. That choice currently weakens the inference the paper wants to make. As reviewer #2 suggests, it may even be informative to do a within-paper analysis, comparing preregistered and non-preregistered studies that way.

A final issue, not mentioned by the reviewers, is the sampling frame. Because the study starts from journals known to publish Registered Reports, the final sample may end up drawing disproportionately from journals that are already more committed to transparency reforms. That does not make the design inappropriate, but it does limit how broadly the findings can be interpreted. One possible way to reduce this problem would be to relax the requirement that companion articles come from the same journal as the Registered Report, although I recognize that doing so also has its downsides.

Peer review 1

Major comments

- I am not fully convinced by the manuscript’s framing of its contribution to the debate over whether preregistration hinders exploratory research. The authors position the study as informing this broader discussion, but the proposed design seems able to support conclusions only about reporting practices, not about research practice itself. This is important because critiques of preregistration typically concern not just whether exploratory work is conducted (or reported), but whether preregistration constrains creativity and novelty and ultimately hinders the progress of science. Assessing disclosure about exploratory research without also examining these related dimensions therefore seems incomplete.

- By the way, the reverse inference is also problematic: even if non-preregistered articles disclose exploratory analyses, this does not mean that the remaining analyses are genuinely confirmatory. This issue is, at present, not addressed in the manuscript.

- More broadly, the proposed design appears to conflate research practice with reporting standards. Registered Reports may show a higher frequency of exploratory analyses simply because journals often require such analyses to be reported in a dedicated section, whereas comparable guidance may not exist for regular articles. For the focal sample and the most common journals, it would therefore be important to discuss and, if possible, analyze the relevant reporting guidelines, while taking into account that these policies may have changed over time. For example, the submission guidelines for Psychological Science state for Registered Reports: “It is reasonable that authors may wish to include additional analyses that were not included in the registered submission. For instance, a new analytic approach might become available between IPA and Stage 2 review, or a particularly interesting and unexpected finding may emerge. Such analyses are admissible but must be clearly justified in the text, appropriately caveated, and reported in a separate section of the Results titled ‘Exploratory analyses’.” As far as I can tell, this recommendation does not appear in the same way for regular manuscripts. This raises the question whether the study measures researcher behavior (bottom-up research practice), or simply adherence to journal policy (top-down reporting requirements)? The authors should account for differences across journals and reporting guidelines (also in their statistical model), as any differences across article types will be difficult to interpret otherwise.

- For the same reason, the manuscript would be more convincing if it also studied the hypothesized consequences of discouraging exploratory research. The Introduction notes the criticism that preregistration may hinder creativity and reduce novelty, and these are precisely the kinds of outcomes assessed by Soderberg et al. (2021) and O’Mahony. The study would therefore be stronger, and better aligned with the concern raised in the Introduction, if it assessed not only whether exploratory analyses are disclosed, but also how novel, creative, rigorous, and transparent the resulting research is.

- Overall, the paper currently reads as a replication of one of O’Mahony’s hypotheses, with the extension to include regular preregistrations. This should be stated more clearly in the Abstract and Introduction. Although such a replication may well be worthwhile, the present proposal would, in my view, require substantial methodological improvement to justify publication. For instance, O’Mahony distinguished exploratory analyses reported in the paper in relation to the studies actually conducted, whereas the justification offered here for coding at the paper level is not yet convincing. In addition, O’Mahony coded several dimensions that seem at least as important as reporting standards alone, including methodological rigour, novelty, creativity, and transparency. At present, the manuscript appears to treat the presence of reported exploratory analyses as a proxy for these broader constructs without measuring them directly, even though they could in principle be incorporated into the coding scheme. If, instead, the authors are primarily interested in reporting practices across publication formats rather than the substantive quality of the research, then it may be worth broadening the coding scheme to include other reporting-related features as well, such as clarity of hypothesis specification, transparency regarding data, materials, and code, etc. In the latter case, this would require a reframing of the Introduction to reflect this shift in focus.

- Finally, I do not find the rationale for disregarding preregistration quality convincing. As the authors note, preregistrations can vary substantially in quality, and this variation may plausibly moderate how rigorously exploratory analyses are reported. Treating all preregistrations as equivalent therefore seems difficult to justify, particularly in light of existing evidence that preregistrations differ considerably from one another.

Minor comments:

- It is currently somewhat unclear how the dependent variable, that is the presence of exploratory research, will be operationalized. O’Mahony used a proportion score based on the exploratory analyses documented in each paper and the proportion of analyses where this distinction was clearly made. My understanding of the present proposal is that each article will simply be coded for whether exploratory research is reported, which would make the outcome variable binary. The authors should clarify whether this interpretation is correct.

- As written, the claim that “the function that preregistration is meant to serve is not always clear or consistent” seems too broad if it is mainly based on a preprint by Lakens (2019). Much of the literature describes the function of preregistration quite consistently as distinguishing confirmatory from exploratory research. For example, Nosek et al. (2018) state in the abstract that preregistration is “an effective solution” because it “distinguishes analyses and outcomes that result from predictions from those that result from postdictions.” Similarly, Munafò et al. (2017) write that preregistration “makes clear the distinction between data-independent confirmatory research … and data-contingent exploratory research.” Parsons et al. (2022) likewise define preregistration as a practice that “aims to clearly distinguish confirmatory from exploratory research.” The authors may therefore wish either to qualify this claim and/or clearly attribute it to the single source (i.e., Lakens, 2019) who claims that there is disagreement about the function of preregistration.

- The authors may wish to discuss additional relevant literature alongside the thesis by O’Mahony. Although Soderberg et al. (2021; https://doi.org/10.1038/s41562-021-01142-4) did not examine exploratory work directly, they did evaluate the novelty, creativity, and quality of Registered Reports relative to standard publishing models, and these findings seem directly relevant here. Wagenmakers et al. (2018; https://doi.org/10.1177/1745691618771357) may also warrant discussion, as it provides a useful historical overview of the relationship between creativity and verification.

- The OSF materials appear to be incomplete and do not currently include the analysis script.

- The abstract contains an incomplete sentence (“The purpose of the present study is to find out.”) and should be revised to state the study aim clearly.

- When preregistration is first mentioned in the Introduction, the authors may wish to note that it was proposed as early as 2012 and cite Wagenmakers et al. (2012; https://doi.org/10.1177/1745691612463078). Similarly, when introducing Registered Reports, Chambers (2013; https://doi.org/10.1016/j.cortex.2012.12.016) would be a more appropriate reference than Besançon et al. (2021).

Peer review 2

It was my pleasure to read and review this registered report.

After reading the report, I unfortunately come to the recommendation not to publish this work (in its current state). The criterion I use is the one that is described in the paper: “the Stage 1 manuscript and review process, addressing the question: will this study produce worthwhile knowledge, regardless of the results?” In my opinion the study, as currently planned and described, will not produce worthwhile knowledge. I provide my reasoning below under ‘major comments’.

However, I hope the authors are willing to adapt their proposed work, as I believe it may produce some worthwhile knowledge when (strongly) adapted.

I also provide some minor comments.

Major comments

(1) Claim and framing. Your abstract and your paper state that your research is about the frequency and value of exploratory research:

(a) Most importantly, your research is NOT about the frequency of exploratory research. It currently is about the reader being able to identify whether research is exploratory or not. This is something very different. Research that is not preregistered does not identify or label research as exploratory or confirmatory, as this distinction is not relevant or cannot be made; I would say almost all of this research is exploratory, as ideas and hypotheses are either not formulated before the research or adapted during the research. While O’Mahony reports a rate of 57% disclosure of exploratory research, she also emphasizes that the coding process was subjective and difficult.

Hence, I think the aim needs to be reframed as examining the frequency of explicit references to exploratory research rather than the frequency of exploratory research per se.

The framing is then also different, for instance, underlining that it is crucial for the reader to be able to distinguish between exploratory and confirmatory research in order to interpret a finding (as p-values have no clear meaning in exploratory research).

(b) The abstract is a bit misleading. Citing from it: “reduce the frequency and value of exploratory research, and therefore restrict creativity, serendipity, and discovery” … “formally examine whether these concerns have any merit”. The use of “these” is misleading, as you do not examine “value of exploratory research, and therefore restrict creativity, serendipity, and discovery”, you only attempt to examine the frequency.

(2) Definition and protocol of exploratory research. On page 6 you define “… exploratory as, “any analysis that is indicated to not be a planned test of a specific hypothesis,””. Given this definition, I expected a protocol for determining when an analysis is ‘indicated to not be a planned test of a specific hypothesis’. Please provide the protocol. For projects like this, and their reproducibility, a clear and objective protocol is essential. Without such a protocol (i) results will not be reproducible, and (ii) results are more difficult to interpret.

To make the protocol more objective and in line with my idea of the research (i.e., the explicit reference to exploratory research), I would use keywords like ‘plann*’ and ‘explor*’ and words that resemble them. This will also make coding easier and less subjective, particularly because most published research does not list hypotheses or distinguish between planned and exploratory research.
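
As an illustration of the keyword-based screen suggested above, here is a minimal Python sketch. Only the stems ‘plann*’ and ‘explor*’ come from the comment; the function name `flag_sentences`, the naive sentence splitter, and the sample text are hypothetical and serve only to show the idea. A usable protocol would need a vetted keyword list and rules for negations and context.

```python
import re

# Stems taken from the reviewer's suggestion ('plann*', 'explor*').
# The leading \b means 'unplanned' is NOT matched; drop it to catch such forms.
KEYWORD_PATTERN = re.compile(r"\b(plann\w*|explor\w*)", re.IGNORECASE)

def flag_sentences(text):
    """Return (sentence, matched_keywords) pairs for sentences containing a keyword."""
    # Naive split on ., ! or ? followed by whitespace (an assumption, not a real tokenizer).
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        hits = [m.group(0).lower() for m in KEYWORD_PATTERN.finditer(sentence)]
        if hits:
            flagged.append((sentence, hits))
    return flagged

# Hypothetical text standing in for a Results section.
sample = (
    "We tested the preregistered hypothesis. "
    "An exploratory analysis examined age effects. "
    "All planned contrasts were significant."
)
for sentence, hits in flag_sentences(sample):
    print(hits, "->", sentence)
```

A screen like this can only flag candidate passages for human raters; it does not decide whether an analysis was in fact unplanned.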

(3) Hypotheses. Please explicitly list your planned hypotheses, also with “H1: …”, “H2:…” etc. This improves the quality of your report and makes clearer what you are doing. We teach our students to do this, but we researchers should do it more in our research too.

Please also take care of your formulation on p7. It is not about “greater reports” but about the prevalence of exploratory research.

Do you or do you not have a hypothesis about registered reports? This is not clear from your intro, as hypotheses are not explicitly listed. And do you have a hypothesis about comparing registered reports to ‘normal’ articles? It is not clear from the text if your hypothesis is of the form A < B < C, which implies three hypotheses on pairwise comparisons, or something else.

Your exploratory hypotheses on p8 are also not clear to me. Do they concern the explicit use of exploratory in ALL published articles (i.e., unconditional) or in registered research (i.e., conditional)? Consider making it explicit.

(4) Ideal registered report. For me, the ideal registered report is one where one simply adds the result section to an existing intro and methods section that is published in Stage 1. Although imo the introduction is quite good, it is not yet a full introduction of the paper (i.e., needs to be adapted). This depends on the journal, what are its criteria for a registered report.

(5) What is a preregistration? On page 9 you define a preregistered paper as a paper with an “accessible link”. Please make this definition more meaningful. I can imagine accessible links to files that do not qualify as preregistrations.

(6) Included studies. You wish to include any type of empirical study, including qualitative, mixed methods, and meta-analyses. I understand, but (i) some designs (e.g., experimental) are more often preregistered than others, (ii) results of these different designs may differ, and (iii) coding may be more cumbersome when dealing with more designs. For these reasons I would understand if you focused on just ONE design, for instance, experimental designs. I think that this will also increase the statistical power of your design, as you control for possible confounding factors this way.

If you want to stick to your original choice, then this is of course fine, but it would be great if you can motivate your choice more.

(7) Article coding. You write on page 12 that ‘coding will be done at the article level rather than individual studies within articles’. To me, this sounds like an awful idea. I strongly recommend coding only the preregistered studies as preregistered, and not also the non-preregistered studies in the article. Actually, I think an excellent test of your hypothesis would be to compare preregistered studies and non-preregistered studies in the same article. Within-subject (in your case ‘within-article’) studies provide more control and more power for testing your hypotheses. You can then also compare whether, within the same paper, authors distinguish more between exploratory and planned in preregistered studies than in non-preregistered studies.

(8) Rater training and reliability. Great that you include training. But why only six articles? Particularly if you include multiple different designs, six is not much imo. Why not 10 in the first stage? First one needs to develop a clear protocol, and then this protocol is tested in the first stage. Then, the protocol can be adapted, and a new batch can be coded, etc.

On page 15 you write “if agreement exceeds … then the training phase will be complete”. I recommend not including this rule, as it is possible that agreement never reaches this threshold. Most important is that you do your best in developing the protocol and training until agreement cannot be improved much, independent of how high this agreement is.

You also write “Perfect agreement will be assessed…”. What does that mean?
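
To make the discussion of rater agreement concrete, the sketch below computes raw percent agreement and Cohen's kappa for two raters. The agreement statistic used in the manuscript is not stated in this review, so Cohen's kappa is an assumption chosen as a common option for two raters and categorical codes. The ratings are invented for illustration, with ten articles following the batch size suggested above.

```python
from collections import Counter

def percent_agreement(rater_a, rater_b):
    """Share of items on which two raters gave the same code."""
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)

def cohens_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two raters on categorical codes."""
    n = len(rater_a)
    observed = percent_agreement(rater_a, rater_b)
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Expected agreement if the raters coded independently with their own base rates.
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical category throughout
    return (observed - expected) / (1 - expected)

# Hypothetical codes for ten training articles: 1 = exploratory analysis reported.
rater_a = [1, 1, 0, 1, 0, 0, 1, 1, 0, 1]
rater_b = [1, 1, 0, 0, 0, 0, 1, 1, 1, 1]
print(percent_agreement(rater_a, rater_b))
print(cohens_kappa(rater_a, rater_b))
```

Reporting both numbers side by side shows how much of the raw agreement could arise by chance, which matters when one code is much more frequent than the other.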

(9) Statistical analyses. I did not understand the statistical analyses (planned hypotheses). First, they seemed to be concerned with comparing frequencies, for which a chi2-test is an option. But then you write about ‘comparing averages’. What averages? Averages cannot be compared using a chi2-test. I had difficulty understanding this entire section.

Please make sure that you make clear how you test each of the explicitly tested hypotheses in the intro. Ideally, you also provide the statistical code for these tests.
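
To illustrate what comparing frequencies with a chi2-test could look like: if the outcome is binary (exploratory research reported or not), three article types give a 3 × 2 table of counts. The sketch below computes the Pearson chi-square statistic for such a table. The counts are invented placeholders, not data, and this is not the authors' planned analysis; it also ignores clustering of articles within journals. For exactly 2 degrees of freedom the chi-square tail probability has the closed form exp(-x/2), so only the standard library is needed.

```python
import math

def chi_square_independence(table):
    """Pearson chi-square statistic and degrees of freedom for an r x c count table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / total
            statistic += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return statistic, dof

# Hypothetical counts: rows = article type, columns = (reports exploratory, does not).
counts = [
    [30, 10],  # Registered Reports
    [24, 16],  # preregistered articles
    [18, 22],  # standard articles
]
statistic, dof = chi_square_independence(counts)
# For exactly 2 degrees of freedom, P(X > x) = exp(-x / 2).
p_value = math.exp(-statistic / 2) if dof == 2 else None
print(statistic, dof, p_value)
```

An ordered hypothesis of the form A < B < C would need pairwise or trend tests rather than this omnibus test alone.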

(10) Power analyses. On page 12 you state that “the effect size in O’Mahony (2023) is not reported”. However, earlier in your report you provide the prevalences, from which you can calculate the effect size. The difference between 75 and 57 percent is not 20 percent, so this part confused me.

Please make sure that the power analyses match the statistical tests used for testing the hypotheses (see (9) above).

Perhaps it makes more sense to write the power analysis section following the planned analyses section, as you must describe the statistical analyses first. But perhaps the journal specified this order?
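
The arithmetic behind comment (10) can be sketched as follows. The code takes the two prevalences mentioned there (75% and 57%) and computes the raw difference and Cohen's h, a standard effect size for the difference between two proportions. Using Cohen's h is an assumption made for illustration and is not necessarily the effect size the authors intend to use.

```python
import math

def cohens_h(p1, p2):
    """Cohen's h: difference between arcsine-transformed proportions."""
    return 2 * math.asin(math.sqrt(p1)) - 2 * math.asin(math.sqrt(p2))

p_high, p_low = 0.75, 0.57  # prevalences quoted in the review comment
raw_difference = p_high - p_low
h = cohens_h(p_high, p_low)
print(f"raw difference = {raw_difference:.2f}")
print(f"Cohen's h = {h:.3f}")
```

The raw difference is 18 percentage points, not 20, and the corresponding h is about 0.38. A power analysis would need to use an effect size that matches the statistical test actually planned.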

Minor comments

“an accessible and” (p5): consider inserting “supposedly” between ‘a’ and ‘accessible’.

You use “1” twice on page 7 when listing statements. Note that it is sufficient to either explicitly state the H0 or the H1.

I would understand it if my review were perceived as unfavourable and undesired news. Sorry for that. However, I sincerely hope the authors can use my review to improve their research proposal, as more research is needed on this topic.

Keep up the good work!

Best wishes,

Marcel van Assen (I always sign my reviews)

Author response

As a preface to our comments, we want to highlight the context of this research project. We submitted this research plan as part of Lifecycle Journal’s pilot program that is focused on assessing the usability and feasibility of the service. This program has strict deadlines. The research plan had to be submitted by Dec 1, 2025, with a 60-day evaluation window, and the final outcomes report must be submitted by Sep 1, 2026. This is a very tight timeline, and so we proposed a small project that would be achievable within those constraints. The tight timeline was exacerbated by an extended evaluation process: instead of 60 days from submission, we had all evaluations in hand approximately 135 days after submission.

We offer this explanation up front as a partial rationale for why we declined to take many reviewer suggestions related to expanding the scope of the project. We simply do not have time or capacity to do so. Although this is never a sufficient scientific explanation, we maintain that our planned project, following the revisions based on the helpful comments, will nevertheless provide informative data, even if on a narrow question. Moreover, the dataset of articles we are assembling will have high reuse potential for metascientists, and some of the suggested expansions could certainly be pursued by us or others down the line.

Finally, following submission of this revised research plan we will formally register the project and begin, and thus the submitted version is the final version of the research plan. Accordingly, whereas we are happy to receive additional comments on the revised plan, we will unfortunately not be able to take any of them into consideration as part of the preregistered plan (we could, of course, incorporate some of them as unregistered aspects).

Editorial Assessment

I much appreciated the detailed explanations for the decisions made in the design, which made it much easier to arrive at an informed assessment. That level of detail also seems to have helped the reviewers provide the elaborate feedback they did. Both reviewers agree that the manuscript takes up an important question, but both also think the current proposal does not yet support the claims it wants to make. In particular, the reviewers think that the study can speak to how exploratory analyses are reported or labeled in published papers, but not to whether preregistration and Registered Reports actually reduce exploratory research itself, or diminish creativity, novelty, and serendipity. They also raise related methodological concerns: the definition of exploratory research is still too loose for reliable coding, the coding plan and statistical analyses are not yet sufficiently specified, and some design choices (coding at the article rather than study level, ignoring differences in preregistration quality, and not accounting for journal-specific reporting requirements) make the eventual interpretation of the results difficult. The reviewers are largely aligned on these central points. However, Reviewer 1 puts more weight on the broader framing of the debate and on the possibility that the study is really picking up journal policy rather than research practice, while Reviewer 2 focuses more on the need for a tighter protocol and a clearer analysis plan. The manuscript is well written, but some of the language in the abstract and Methods section is imprecise, and that lack of precision contributes to the larger conceptual problem.

Author response: Thank you, we are glad to hear you appreciated the detail. We are grateful for the reviewers’ detailed and helpful feedback, and provide full responses below following the reviewer comments.

Recommendations from the editor

The main revision needed is a clearer match between the paper’s framing and what the proposed design can actually show. At the moment, the study seems able to examine the explicit reporting of exploratory analyses but not the actual frequency or value of exploratory research. The abstract and introduction should reflect that. Additionally, it should not be implied that the study will test whether preregistration restricts creativity, discovery, or serendipity unless those outcomes are going to be measured directly.

Author response: We have now clarified the framing and the scope of the project throughout the text, making it clear that we are analyzing reports of exploratory research rather than the actual practice per se. We have also added the following to the paper, explaining why we are taking this approach and why there is no good alternative:

“A major challenge to drawing any conclusions about the frequency of exploratory research between registered and non-registered articles is that the ability to do so is entirely dependent on researchers’ reporting. Given the widespread prevalence of HARKing, in which exploratory findings are reframed and presented as confirmatory, it is simply not possible to derive an accurate estimate of how often exploratory research is occurring when there are no reporting restrictions in place (Wagenmakers et al., 2018). Thus, when making such comparisons, examining the reporting practices in articles is the best available indicator. Although reports are an imperfect proxy for actual practice, they remain highly relevant to the debate about the status of exploration in registered articles, and specifically address the claims that registration would restrict exploratory research. That is, if registration does in fact restrict exploration, there should be low levels of exploration reported in research articles.”

The manuscript would benefit from an explicit coding protocol that spells out how raters will identify exploratory analyses and what counts as sufficient evidence that an analysis was unplanned. It is difficult to assess whether this important assessment is valid based on the text in the manuscript alone. Both reviewers already indicated some concerns about this validity. Moreover, the coding plan seems to focus mainly on the Introduction, Results/Data Analysis, and Discussion, but in psychology papers, analytic decisions are often not presented in a separate data analysis section but folded into the Methods section. As a practical adjustment, I think it would make sense to also code those parts of the Methods section that describe the analysis plan, even when they are not explicitly labeled as such.

Author response: The full coding protocol is now included in the OSF page. And yes, we agree the descriptions of the analytic plan sometimes appear in the Method section, and so we will review those sections as well.

I would also encourage the authors to reconsider the decision to code at the article level rather than the study level, especially in multi-study papers that may mix preregistered and non-preregistered components. That choice currently weakens the inference the paper wants to make. As reviewer #2 suggests, it may even be informative to do a within-paper analysis, comparing preregistered and non-preregistered studies that way.

Author response: Indeed, this decision evoked strong reactions from the reviewers. We continue to believe that our rationale for coding at the article level is sound, but it is obvious that others do not see it that way. Accordingly, we have revised our inclusion criteria to only consider articles where all studies are preregistered. We will then conduct exploratory within-article examinations of the articles that consist of registered and non-registered studies. Our preliminary article screening has indicated that mixed Registered Reports constitute only a small number of articles, so there are unlikely to be enough to conduct any hypothesis tests. Excluding them reduces heterogeneity in the focal set, while the exploratory analysis could provide some interesting insights.

A final issue, not mentioned by the reviewers, is the sampling frame. Because the study starts from journals known to publish Registered Reports, the final sample may end up drawing disproportionately from journals that are already more committed to transparency reforms. That does not make the design inappropriate, but it does limit how broadly the findings can be interpreted. One possible way to reduce this problem would be to relax the requirement that companion articles come from the same journal as the Registered Report, although I recognize that doing so also has its downsides.

Author response: We agree that this is a potential limitation. Selecting journals/articles and matches is difficult, and always involves some trade-offs. On balance, we believe it is critical to select matches from the same journals and timeframes, given the variations in journal policies (as identified by the reviewers). As a way to check the implications of our decision, we have added a small sample of matched traditional articles from journals that do not publish Registered Reports. Doing so will provide some indication of the representativeness of the journals selected.

Review #1

Major comments

I am not fully convinced by the manuscript’s framing of its contribution to the debate over whether preregistration hinders exploratory research. The authors position the study as informing this broader discussion, but the proposed design seems able to support conclusions only about reporting practices, not about research practice itself. This is important because critiques of preregistration typically concern not just whether exploratory work is conducted (or reported), but whether preregistration constrains creativity and novelty and ultimately hinders the progress of science. Assessing disclosure about exploratory research without also examining these related dimensions therefore seems incomplete.

Author response: As noted in our response to the Editor, the paper is now clearer about the scope of the project and about why we focus narrowly on reports of exploratory research.

By the way, the reverse inference is also problematic: even if non-preregistered articles disclose exploratory analyses, this does not mean that the remaining analyses are genuinely confirmatory. This issue is, at present, not addressed in the manuscript.

Author response: Yes, we agree, and we made no claims about the prevalence of confirmatory analyses. This continues to be the case in the revision.

More broadly, the proposed design appears to conflate research practice with reporting standards. Registered Reports may show a higher frequency of exploratory analyses simply because journals often require such analyses to be reported in a dedicated section, whereas comparable guidance may not exist for regular articles. For the focal sample and the most common journals, it would therefore be important to discuss and, if possible, analyze the relevant reporting guidelines, while taking into account that these policies may have changed over time. For example, the submission guidelines for Psychological Science state for Registered Reports: “It is reasonable that authors may wish to include additional analyses that were not included in the registered submission. For instance, a new analytic approach might become available between IPA and Stage 2 review, or a particularly interesting and unexpected finding may emerge. Such analyses are admissible but must be clearly justified in the text, appropriately caveated, and reported in a separate section of the Results titled ‘Exploratory analyses’.” As far as I can tell, this recommendation does not appear in the same way for regular manuscripts. This raises the question whether the study measures researcher behavior (bottom-up research practice), or simply adherence to journal policy (top-down reporting requirements)? The authors should account for differences across journals and reporting guidelines (also in their statistical model), as any differences across article types will be difficult to interpret otherwise.

Author response: Thank you for raising this important point. We agree about the expectation of reporting for Registered Reports, which is one reason we are including preregistered articles. It is, unfortunately, not feasible to examine how journal policies have changed over time and to map that to specific articles, as journal policies are updated often and rarely archived. As a way to partially address this concern, we will code for current journal policies, as now described in the manuscript, and conduct exploratory analyses on their potential relation. Moreover, in response to the comments from PaperWizard, all analyses will be clustered by journal, so at least we can account for journal-level variation.

For the same reason, the manuscript would be more convincing if it also studied the hypothesized consequences of discouraging exploratory research. The Introduction notes the criticism that preregistration may hinder creativity and reduce novelty, and these are precisely the kinds of outcomes assessed by Soderberg et al. (2021) and O’Mahony. The study would therefore be stronger, and better aligned with the concern raised in the Introduction, if it assessed not only whether exploratory analyses are disclosed, but also how novel, creative, rigorous, and transparent the resulting research is.

Author response: We agree that doing this would be informative. As noted at the outset of this letter, this current project is small scale, focused, and on a very tight external deadline. We believe that generating data related to the narrow claim about registration is still useful. Moreover, there are not good measures available to assess creativity and novelty in these kinds of articles. Soderberg et al. relied on single-item assessments with no descriptive text, and O’Mahony did not assess creativity and novelty. Thus, we will indicate this as a limitation and a useful direction for follow-up work.

Overall, the paper currently reads as a replication of one of O’Mahony’s hypotheses, with the extension to include regular preregistrations. This should be stated more clearly in the Abstract and Introduction. Although such a replication may well be worthwhile, the present proposal would, in my view, require substantial methodological improvement to justify publication. For instance, O’Mahony distinguished exploratory analyses reported in the paper in relation to the studies actually conducted, whereas the justification offered here for coding at the paper level is not yet convincing. In addition, O’Mahony coded several dimensions that seem at least as important as reporting standards alone, including methodological rigour, novelty, creativity, and transparency. At present, the manuscript appears to treat the presence of reported exploratory analyses as a proxy for these broader constructs without measuring them directly, even though they could in principle be incorporated into the coding scheme. If, instead, the authors are primarily interested in reporting practices across publication formats rather than the substantive quality of the research, then it may be worth broadening the coding scheme to include other reporting-related features as well, such as clarity of hypothesis specification, transparency regarding data, materials, and code, etc. In the latter case, this would require a reframing of the Introduction to reflect this shift in focus.

Author response: We agree that these are all good ideas, but seek to keep the scope limited to the narrow question of interest. We have now more clearly indicated that the study is a replication and extension of O’Mahony. Contrary to the reviewer’s claims, O’Mahony did not assess creativity and novelty.

Finally, I do not find the rationale for disregarding preregistration quality convincing. As the authors note, preregistrations can vary substantially in quality, and this variation may plausibly moderate how rigorously exploratory analyses are reported. Treating all preregistrations as equivalent therefore seems difficult to justify, particularly in light of existing evidence that preregistrations differ considerably from one another.

Author response: We agree that examining the contents of the preregistrations would be informative, but we have decided against doing so for two primary reasons. First, it is simply not feasible to do so within the constraints of the project. Second, it is not entirely clear how to think about registration quality in relation to our research questions. As this reviewer and others have noted, we are examining reporting in the articles, and that is useful regardless of registration quality. Moreover, undisclosed deviations from registrations do not really represent exploratory research in its intended sense, but rather are much more likely to represent explorations that were reframed as confirmatory. Although this is of course a type of exploration, it represents something qualitatively different from direct reports of exploratory work.

Minor comments:

It is currently somewhat unclear how the dependent variable, that is the presence of exploratory research, will be operationalized. O’Mahony used a proportion score based on the exploratory analyses documented in each paper and the proportion of analyses where this distinction was clearly made. My understanding of the present proposal is that each article will simply be coded for whether exploratory research is reported, which would make the outcome variable binary. The authors should clarify whether this interpretation is correct.

Author response: We have clarified the coding process in the manuscript and in the appended protocol. It is true that O’Mahony used a proportion score, but the analysis reported just before that used a binary presence rating such as the one we had proposed. As our focus is only on exploratory research, we only include the presence ratings.

As written, the claim that “the function that preregistration is meant to serve is not always clear or consistent” seems too broad if it is mainly based on a preprint by Lakens (2019). Much of the literature describes the function of preregistration quite consistently as distinguishing confirmatory from exploratory research. For example, Nosek et al. (2018) state in the abstract that preregistration is “an effective solution” because it “distinguishes analyses and outcomes that result from predictions from those that result from postdictions.” Similarly, Munafò et al. (2017) write that preregistration “makes clear the distinction between data-independent confirmatory research … and data-contingent exploratory research.” Parsons et al. (2022) likewise define preregistration as a practice that “aims to clearly distinguish confirmatory from exploratory research.” The authors may therefore wish either to qualify this claim and/or clearly attribute it to the single source (i.e., Lakens, 2019) who claims that there is disagreement about the function of preregistration.

Author response: We agree, as noted in the paper, that the exploratory/confirmatory distinction is a primary rationale for preregistration. But it is well-documented that it is not the only rationale. This is well covered in Lakens (2019) as well as Syed (2024), the latter of which briefly summarizes these different rationales:

“One of the challenges of understanding preregistration—and the criticisms of it—are that there are different rationales for why researchers should do it. These include clearly distinguishing between what decisions were made prior to seeing the data (“confirmatory” analyses) from what decisions were made after seeing the data (“exploratory” analyses), and preventing the latter as being framed as the former in a research report ; reducing the prevalence of undisclosed data-dependent decision-making ; evaluating the severity of a test ; and serving as formal documentation of the study design and analysis plan.”

Additionally, we do not see why a single source, which we do not rely on anyway, is a problem, nor is relying on a preprint (as it happens, this was an error in our Zotero library; the paper is indeed published, and this has been updated). Accordingly, we have not made any changes in response to this comment.

The authors may wish to discuss additional relevant literature alongside the thesis by O’Mahony. Although Soderberg et al. (2021; https://doi.org/10.1038/s41562-021-01142-4) did not examine exploratory work directly, they did evaluate the novelty, creativity, and quality of Registered Reports relative to standard publishing models, and these findings seem directly relevant here. Wagenmakers et al. (2018; https://doi.org/10.1177/1745691618771357) may also warrant discussion, as it provides a useful historical overview of the relationship between creativity and verification.

Author response: Thank you, we have added reference to these articles.

The OSF materials appear to be incomplete and do not currently include the analysis script.

Author response: Thank you, the scripts are now available as indicated in the manuscript.

The abstract contains an incomplete sentence (“The purpose of the present study is to find out.”) and should be revised to state the study aim clearly.

Author response: We have revised the sentence for clarity.

When preregistration is first mentioned in the Introduction, the authors may wish to note that it was proposed as early as 2012 and cite Wagenmakers et al. (2012; https://doi.org/10.1177/1745691612463078). Similarly, when introducing Registered Reports, Chambers (2013; https://doi.org/10.1016/j.cortex.2012.12.016) would be a more appropriate reference than Besançon et al. (2021).

Author response: Both of those articles were already cited very early on in the Introduction. We realized that the citation placement of Besançon et al. (2021) was a bit misleading, so have made edits to clarify.

Review #2

It was my pleasure to read and review this registered report.

After reading the report, I unfortunately come to the recommendation not to publish this work (in its current state). The criterion I use is the one that is described in the paper: “the Stage 1 manuscript and review process, addressing the question: will this study produce worthwhile knowledge, regardless of the results?” In my opinion the study, as currently planned and described, will not produce worthwhile knowledge. I provide my reasoning below under ‘major comments’.

However, I hope the authors are willing to adapt their proposed work, as I believe it may produce some worthwhile knowledge when (strongly) adapted.

I also provide some minor comments.

Author response: We appreciate the helpful feedback!

Major comments

(1) Claim and framing. Your abstract and your paper state that your research is about the frequency and value of exploratory research:

(a) Most importantly, your research is NOT about the frequency of exploratory research. It currently is about the reader being able to identify whether research is exploratory or not. This is something very different. Research that is not preregistered does not identify or label research as exploratory or confirmatory, as this distinction is not relevant or cannot be made – I would say almost all of this research is exploratory as ideas and hypotheses are either not formulated before the research or adapted during the research. My idea is supported by the results of the dissertation of O’Mahony, which did or could not identify exploratory research in non-preregistered research.

Hence, I think the aim needs to be reframed into examining the frequency of explicit references to exploratory research rather than the frequency of exploratory research per se. The framing is then also different, for instance, underlining that it is crucial for the reader to be able to distinguish between exploratory and confirmatory research (as p-values have no clear meaning in exploratory research) in order to interpret a finding.

Author response: As noted above, we have addressed this major concern. We will note, however, that the reviewer is incorrect about O’Mahony’s findings. It is not true that the study “did or could not identify exploratory research in non-preregistered research.” Rather, they found a rate of 57% reported exploratory research in non-registered research.

(b) The abstract is a bit misleading. Citing from it: “reduce the frequency and value of exploratory research, and therefore restrict creativity, serendipity, and discovery” … “formally examine whether these concerns have any merit”. The use of “these” is misleading, as you do not examine “value of exploratory research, and therefore restrict creativity, serendipity, and discovery”, you only attempt to examine the frequency.

Author response: We have revised the abstract to clarify.

(2) Definition and protocol of exploratory research. On page 6 you define “… exploratory as, “any analysis that is indicated to not be a planned test of a specific hypothesis,””. Given this definition, I expected a protocol for ‘indicated to not be a planned test of a specific hypothesis’. Please provide the protocol. For projects like this, and their reproducibility, a clear and objective protocol is essential. Without such a protocol (i) results will not be reproducible, and (ii) results are more difficult to interpret.

Author response: We now add a link to the full coding protocol. Importantly, there is no fully reproducible approach that can be used for this question. As we describe in the manuscript and in the manual, there is necessarily some subjectivity involved given that context must be taken into account. However, more clearly laid out coding procedures do indeed help with interpretation, and we will also make all of the articles and our coding openly available.

To make the protocol more objective and in line with my idea of the research (i.e., the explicit reference to exploratory research), I would use keywords like ‘plann*’ and ‘explor*’ and words that resemble it. This also will make coding easier and less subjective. Particularly because most published research does not list hypotheses or distinguish between planned and exploratory research.

Author response: Thank you, we have added several search terms in the coding protocol. As noted, this process will inherently involve some subjectivity.
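
For illustration only, the stem search suggested above could look like the following minimal Python sketch. The two stems come from the reviewer's comment; the pattern, the function name `find_keyword_hits`, and the sample sentence are assumptions made here and are not taken from the authors' coding protocol, which may list different or additional terms.

```python
import re

# Hypothetical stems based on the reviewer's suggestion ('plann*', 'explor*').
# The authors' actual search terms live in their coding protocol and may differ.
KEYWORD_PATTERN = re.compile(r"\b(plann|explor)\w*", re.IGNORECASE)


def find_keyword_hits(text):
    """Return every word in `text` that begins with one of the stems."""
    return [match.group(0) for match in KEYWORD_PATTERN.finditer(text)]


# Invented sample sentence, used only to show the behaviour of the search.
sample = "We ran planned contrasts, followed by exploratory moderator analyses."
print(find_keyword_hits(sample))
```

A search like this only flags candidate passages. Whether a flagged passage actually reports exploratory research still depends on context, which is the subjectivity the authors describe.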

(3) Hypotheses. Please explicitly list your planned hypotheses, also with “H1: …”, “H2:…” etc. Improves the quality of your report, makes clearer what you are doing. We teach our students to do this, but we researchers should do it more in our research too.

Author response: Thank you, this point is well taken, and the hypotheses are now more clearly labeled at the end of the Introduction and when discussing the analyses.

Please also take care of your formulation on p7. It is not about “greater reports” but about the prevalence of exploratory research.

Author response: We agree that this formulation is more accurate and have edited the paper throughout.

Do you or do you not have a hypothesis about registered reports? This is not clear from your intro, as hypotheses are not explicitly listed. And do you have a hypothesis about comparing registered reports to ‘normal’ articles? It is not clear from the text if your hypothesis is of the form A < B < C, which implies three hypotheses on pairwise comparisons, or something else.

Author response: Thank you, we have reformulated the hypotheses to make our specific predictions clear.

Your exploratory hypotheses on p8 are also not clear to me. Do they concern the explicit use of exploratory in ALL published articles (i.e., unconditional) or in registered research (i.e., conditional)? Consider making it explicit.

Author response: The planned exploratory tests have now been clarified.

(4) Ideal registered report. For me, the ideal registered report is one where one simply adds the result section to an existing intro and methods section that is published in Stage 1. Although imo the introduction is quite good, it is not yet a full introduction of the paper (i.e., needs to be adapted). This depends on the journal, what are its criteria for a registered report.

Author response: We aim to keep the Introduction as brief as possible. We have revised it based on the comments, but otherwise have not added any additional information.

(5) What is a preregistration? On page 9 you define a preregistered paper as a paper with an “accessible link”. Please make this definition more precise. I can imagine accessible links to files that do not qualify as preregistrations.

Author response: We have revised the criteria to read as follows: “An empirical article in which a) all studies are stated to be preregistered and b) for which the registration document can be located (either via an included link in the manuscript or via manual search). Because of the wide variety in implementation quality, we will not require that the registration document has been formally registered, as long as it can be located (e.g., a registration document is on the OSF project page, but not registered).”

(6) Included studies. You wish to include any type of empirical studies, including qualitative, mixed methods, and meta-analyses. I understand, but (i) some designs (e.g., experimental) are more often preregistered than others, and (ii) results of these different designs may be different, and (iii) coding may be more cumbersome when dealing with more different designs. Because of these reasons I would understand if you focus on just ONE design, for instance, experimental designs. I think that this will also increase the statistical power of your design, as you control for possible confounding factors this way. If you want to stick to your original choice, then this is of course fine, but it would be great if you can motivate your choice more.

Author response: There is no reason to expect exploratory analyses would be more frequent in experimental vs. observational work, so we do not think it is important to restrict inclusion based on these types of designs. However, exploratory analyses could be different for qualitative, mixed methods, and meta-analytic studies, so we will now exclude these from the analysis.

(7) Article coding. You write on page 12 that ‘coding will be done at the article level rather than individual studies within articles’. To me, this sounds like an awful idea. I strongly recommend to code only the preregistered studies as preregistered, and not also the non-preregistered studies in the article. Actually, I think an excellent test of your hypothesis would be to compare preregistered studies and non-preregistered studies in the same article. Within-subject (in your case ‘within-article’) studies provide more control and more power for testing your hypotheses. You can then also compare if, within the same paper, authors distinguish more between exploratory and planned in preregistered studies than in non-preregistered studies.

Author response: As already indicated, we have altered the design to restrict the primary analysis to non-mixed articles and then conduct exploratory within-article analyses on the mixed articles.

(8) Rater training and reliability. Great that you include training. But why only six articles? Particularly if you include multiple different designs, six is not much imo. Why not 10 in the first stage? First one needs to develop a clear protocol, and then this protocol is tested in the first stage. Then, the protocol can be adapted, and a new batch can be coded, etc. On page 15 you write “if agreement exceeds … then the training phase will be complete”. I recommend not to include this rule, as it is possible that agreement never reaches this threshold. Most important is that you do your best with developing the protocol and training, until agreement cannot be improved much, independent of how high this agreement is. You also write “Perfect agreement will be assessed…”. What does that mean?

Author response: In our many years of coding open-ended text and article data we have found that the detailed procedure works well, so we have stayed with the plan as described. We believe the reviewer may have misread the text, as the sentence in question reads “percent” not “perfect.”
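
As a point of reference, percent agreement between two raters on a binary presence code can be computed as in the minimal Python sketch below. The function name and the six pairs of codes are invented for illustration and do not come from the authors' training data; the sketch also does not correct for chance agreement.

```python
def percent_agreement(rater_a, rater_b):
    """Percentage of articles on which two raters assigned the same code."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must code the same non-empty set of articles.")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return 100.0 * matches / len(rater_a)


# Hypothetical codes for six training articles
# (1 = exploratory research reported, 0 = not reported).
rater_a = [1, 0, 1, 1, 0, 1]
rater_b = [1, 0, 0, 1, 0, 1]
print(round(percent_agreement(rater_a, rater_b), 1))
```

With only six articles, a single disagreement moves the figure by almost 17 percentage points, which is one way to read the reviewer's question about the size of the training set.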

(9) Statistical analyses. I did not understand the statistical analyses (planned hypotheses). First, they seemed to be concerned with comparing frequencies, and then a chi2-test is an option. But then you write about ‘comparing averages’. What averages? And averages cannot be compared using a chi2-test. I had difficulties understanding this complete section. Please make sure that you make clear how you test each of the explicitly tested hypotheses in the intro. Ideally, you also provide the statistical code for these tests.

Author response: This section has been completely overhauled, clearly indicating how each hypothesis is being tested, with links to the analytic code.
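
To make the reviewer's point about comparing frequencies concrete, the sketch below runs a Pearson chi-square test on a 2x2 table using only the Python standard library. It is an illustration, not the authors' analytic code: the counts are hypothetical (100 articles per group is an invented sample size, chosen so that the rows correspond to 75% and 57%), the function name is an assumption, and the test ignores the journal-level clustering that the authors say their revised analyses account for.

```python
import math


def chi_square_2x2(table):
    """Pearson chi-square test (df = 1, no continuity correction) for a 2x2 table.

    `table` is [[a, b], [c, d]]: rows are groups, columns are outcome present/absent.
    Returns (chi2, p_value, phi).
    """
    (a, b), (c, d) = table
    n = a + b + c + d
    row_totals = (a + b, c + d)
    col_totals = (a + c, b + d)
    if 0 in row_totals or 0 in col_totals:
        raise ValueError("Every row and column needs at least one observation.")

    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected

    # With one degree of freedom, the upper tail probability is erfc(sqrt(chi2 / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2.0))
    # Phi is the effect size for a 2x2 table.
    phi = math.sqrt(chi2 / n)
    return chi2, p_value, phi


# Hypothetical counts: [reported exploratory analyses, did not report them].
hypothetical_table = [[75, 25], [57, 43]]
chi2, p_value, phi = chi_square_2x2(hypothetical_table)
print(f"chi2 = {chi2:.2f}, p = {p_value:.4f}, phi = {phi:.2f}")
```

A test of this kind compares counts or proportions between groups, not averages, which is the distinction the reviewer asks the authors to make explicit.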

(10) Power analyses. On page 12 you state that “the effect size in O’Mahony (2023) is not reported”. However, earlier in your report you provide the prevalences, with which you can calculate the effect size. The difference between 75 and 57 percent is not 20 percent, so this part confused me.

Author response: That sentence meant that the effect size phi was not reported; we have now edited the sentence for clarity. Regarding the percent difference, the sentence reads, “This corresponded to an approximate difference in prevalence of 20%”. The actual difference was 18%, and in this sentence we were providing an approximation. In any case, this sentence has been removed through revision of the power analysis.
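
The arithmetic in this exchange can be checked with a short Python sketch. It computes the raw difference between the two prevalences mentioned above (75% and 57%) and Cohen's h, an effect size for two proportions that needs no sample sizes. Cohen's h is used here as a stand-in chosen for illustration; it is not the phi coefficient the authors refer to, which would require the underlying cell counts.

```python
import math


def cohens_h(p1, p2):
    """Cohen's h: difference between two arcsine-transformed proportions."""
    for p in (p1, p2):
        if not 0.0 <= p <= 1.0:
            raise ValueError("Proportions must lie between 0 and 1.")
    return 2.0 * math.asin(math.sqrt(p1)) - 2.0 * math.asin(math.sqrt(p2))


# Prevalences discussed in the review.
p_higher = 0.75
p_lower = 0.57

difference_points = (p_higher - p_lower) * 100.0  # difference in percentage points
h = cohens_h(p_higher, p_lower)
print(f"difference = {difference_points:.0f} percentage points, h = {h:.2f}")
```

The raw difference comes out at 18 percentage points, consistent with the authors' statement that 20% was an approximation.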

Please make sure that the power analyses match the statistical tests used for testing the hypotheses (see (9) above).

Author response: This section has been completely overhauled, and the power analysis for each test is indicated after the test is described.

Perhaps it makes more sense to write the power analysis section following the planned analyses section, as you must describe the statistical analyses first. But perhaps the journal specified this order?

Author response: As noted, the power analysis for each test is now indicated following the description of the test.

Minor comments

“an accessible and” (p5): consider inserting “supposedly” between ‘a’ and ‘accessible’.

Author response: Changed

You use “1” twice on page 7 when listing statements. Note that it is sufficient to either explicitly state the H0 or the H1.

Author response: The section describing the hypotheses has been completely revised.

I would understand it if my review were perceived as unfavourable and undesired news. Sorry for that. However, I sincerely hope the authors can use my review to improve their research proposal, as more research is needed on this topic.

Keep up the good work! Best wishes,

Marcel van Assen (I always sign my reviews)

Author response: We appreciate the feedback, and the transparency, and believe the comments have greatly improved the project.